This article examines several commonly used loss functions that ship with Keras.

1. categorical_crossentropy vs. sparse_categorical_crossentropy

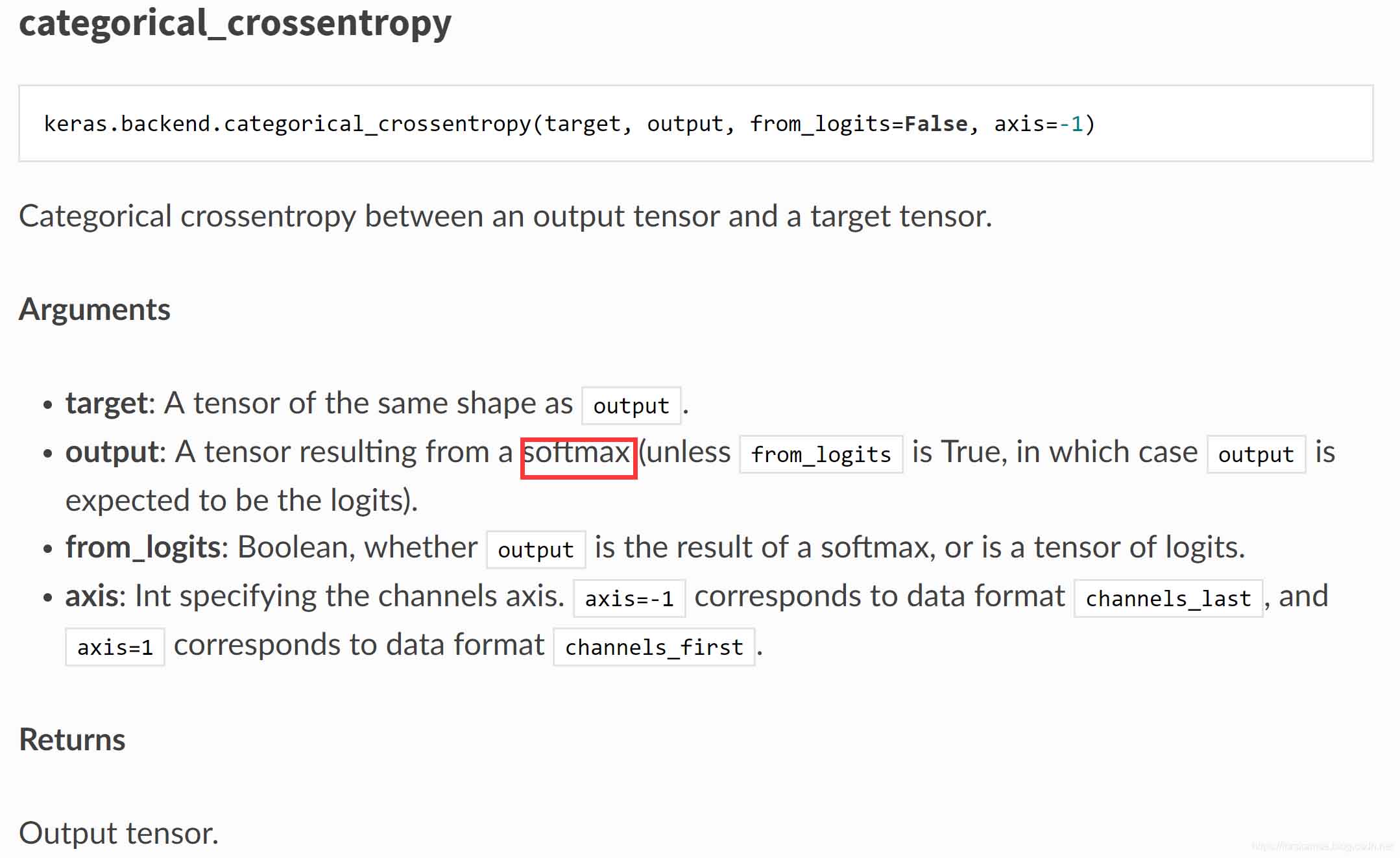

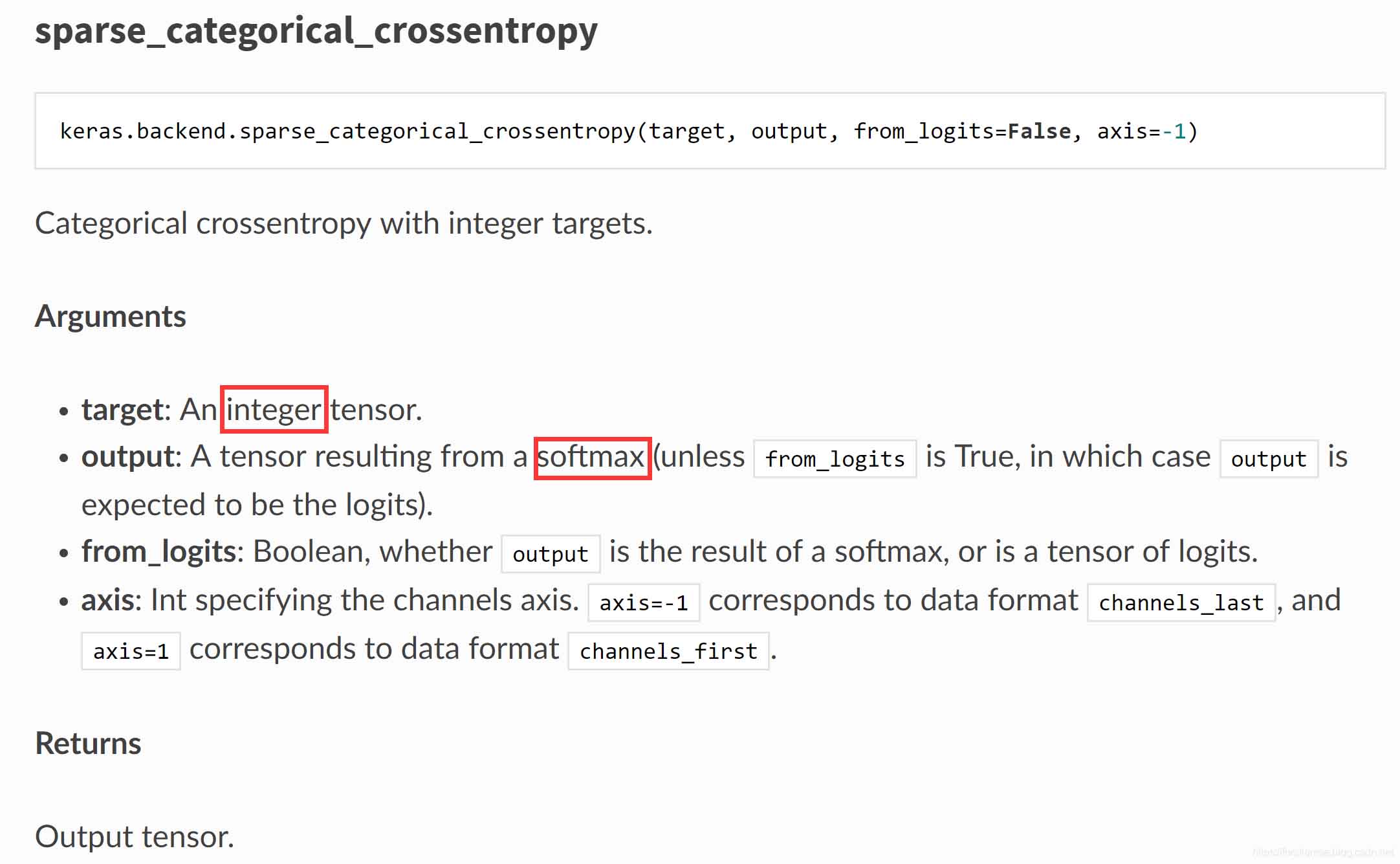

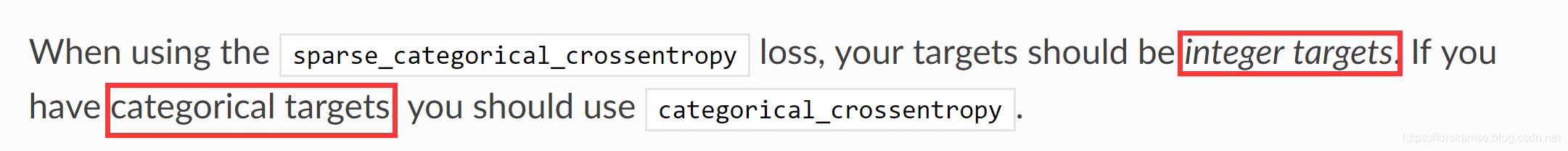

Note that the main difference between the two is whether the target is an integer tensor. The documentation also describes how to choose between them:

To explain the integer target and categorical target mentioned here: an integer target becomes a categorical target once it is one-hot encoded. For example:

(Number of classes: 5)

Integer target: [1, 2, 4]

Categorical target: [[0. 1. 0. 0. 0.]
                     [0. 0. 1. 0. 0.]
                     [0. 0. 0. 0. 1.]]

Keras provides the to_categorical utility to convert integer targets into categorical targets:

from keras.utils import to_categorical

categorical_labels = to_categorical(int_labels, num_classes=None)
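As a rough illustration of what this conversion does, the following plain-Python sketch one-hot encodes the integer targets from the example above. The helper name `one_hot` is hypothetical and is not part of Keras; `to_categorical` itself returns a NumPy array rather than nested lists.

```python
def one_hot(int_labels, num_classes):
    """Turn integer class indices into one-hot rows (hypothetical helper)."""
    rows = []
    for label in int_labels:
        row = [0.0] * num_classes   # start with all zeros
        row[label] = 1.0            # mark the position of the true class
        rows.append(row)
    return rows


categorical_labels = one_hot([1, 2, 4], num_classes=5)
for row in categorical_labels:
    print(row)
```

Each row contains exactly one 1.0, at the index given by the corresponding integer label.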

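Note that for both categorical_crossentropy and sparse_categorical_crossentropy, the output argument is by default the tensor produced by a softmax. A softmax output is a probability distribution over mutually exclusive classes (the parameters of a multinomial distribution), so both losses should be applied to multi-class classification problems.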
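To make the softmax assumption concrete, here is a minimal sketch in plain Python (not the Keras implementation) showing that softmax turns arbitrary scores into positive values that sum to 1 across classes. The function name `softmax` and the example scores are illustrative assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of scores."""
    shift = max(logits)                           # subtract the max to avoid overflow
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])
print(probs)        # one probability per class
print(sum(probs))   # approximately 1.0
```

Because the probabilities compete for a fixed total of 1, raising one class necessarily lowers the others, which is why this output fits single-label, multi-class problems.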

Let's look at the source code of both functions to verify this:

https://github.com/tensorflow/tensorflow/blob/r1.13/tensorflow/python/keras/backend.py

--------------------------------------------------------------------------------------------------------------------

def categorical_crossentropy(target, output, from_logits=False, axis=-1):
  """Categorical crossentropy between an output tensor and a target tensor.

  Arguments:
      target: A tensor of the same shape as `output`.
      output: A tensor resulting from a softmax
          (unless `from_logits` is True, in which
          case `output` is expected to be the logits).
      from_logits: Boolean, whether `output` is the
          result of a softmax, or is a tensor of logits.
      axis: Int specifying the channels axis. `axis=-1` corresponds to data
          format `channels_last', and `axis=1` corresponds to data format
          `channels_first`.

  Returns:
      Output tensor.

  Raises:
      ValueError: if `axis` is neither -1 nor one of the axes of `output`.
  """
  rank = len(output.shape)
  axis = axis % rank
  # Note: nn.softmax_cross_entropy_with_logits_v2
  # expects logits, Keras expects probabilities.
  if not from_logits:
    # scale preds so that the class probas of each sample sum to 1
    output = output / math_ops.reduce_sum(output, axis, True)
    # manual computation of crossentropy
    epsilon_ = _to_tensor(epsilon(), output.dtype.base_dtype)
    output = clip_ops.clip_by_value(output, epsilon_, 1. - epsilon_)
    return -math_ops.reduce_sum(target * math_ops.log(output), axis)
  else:
    return nn.softmax_cross_entropy_with_logits_v2(labels=target, logits=output)

--------------------------------------------------------------------------------------------------------------------

def sparse_categorical_crossentropy(target, output, from_logits=False, axis=-1):
  """Categorical crossentropy with integer targets.

  Arguments:
      target: An integer tensor.
      output: A tensor resulting from a softmax
          (unless `from_logits` is True, in which
          case `output` is expected to be the logits).
      from_logits: Boolean, whether `output` is the
          result of a softmax, or is a tensor of logits.
      axis: Int specifying the channels axis. `axis=-1` corresponds to data
          format `channels_last', and `axis=1` corresponds to data format
          `channels_first`.

  Returns:
      Output tensor.

  Raises:
      ValueError: if `axis` is neither -1 nor one of the axes of `output`.
  """
  rank = len(output.shape)
  axis = axis % rank
  if axis != rank - 1:
    permutation = list(range(axis)) + list(range(axis + 1, rank)) + [axis]
    output = array_ops.transpose(output, perm=permutation)

  # Note: nn.sparse_softmax_cross_entropy_with_logits
  # expects logits, Keras expects probabilities.
  if not from_logits:
    epsilon_ = _to_tensor(epsilon(), output.dtype.base_dtype)
    output = clip_ops.clip_by_value(output, epsilon_, 1 - epsilon_)
    output = math_ops.log(output)

  output_shape = output.shape
  targets = cast(flatten(target), 'int64')
  logits = array_ops.reshape(output, [-1, int(output_shape[-1])])
  res = nn.sparse_softmax_cross_entropy_with_logits(
      labels=targets, logits=logits)
  if len(output_shape) >= 3:
    # If our output includes timesteps or spatial dimensions we need to reshape
    return array_ops.reshape(res, array_ops.shape(output)[:-1])
  else:
    return res

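When from_logits is True, categorical_crossentropy computes the cross-entropy with nn.softmax_cross_entropy_with_logits_v2(labels=target, logits=output); otherwise it computes -sum(target * log(output)) by hand. sparse_categorical_crossentropy uses nn.sparse_softmax_cross_entropy_with_logits(labels=targets, logits=logits). The two are essentially the same and differ only in what they require of labels: v2 expects labels with the same shape as logits (for example, one-hot rows), whereas the sparse variant expects each label to be a single integer class index. This mirrors the comparison between integer targets and categorical targets above.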

In summary, categorical_crossentropy and sparse_categorical_crossentropy differ only in the type of the target argument. The loss they compute is essentially the same, namely cross-entropy, and both are intended for multi-class tasks.
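The equivalence can be checked by hand. The sketch below is a simplified plain-Python version for a single sample, not the TensorFlow code: the function names, the example probabilities, and the `eps` default of 1e-7 are assumptions made for illustration.

```python
import math


def categorical_ce(one_hot_target, probs, eps=1e-7):
    """-sum(target * log(output)) for one sample, with clipping as in the source."""
    clipped = [min(max(p, eps), 1.0 - eps) for p in probs]
    return -sum(t * math.log(p) for t, p in zip(one_hot_target, clipped))


def sparse_categorical_ce(int_target, probs, eps=1e-7):
    """-log(probability of the true class) for one sample."""
    p = min(max(probs[int_target], eps), 1.0 - eps)
    return -math.log(p)


probs = [0.1, 0.7, 0.2]          # assumed softmax output for one sample
dense = categorical_ce([0.0, 1.0, 0.0], probs)
sparse = sparse_categorical_ce(1, probs)
print(dense, sparse)             # the two values agree
```

With a one-hot target, every term of the sum except the true class is multiplied by zero, so the dense form collapses to the same single `-log(p)` that the sparse form reads off directly.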

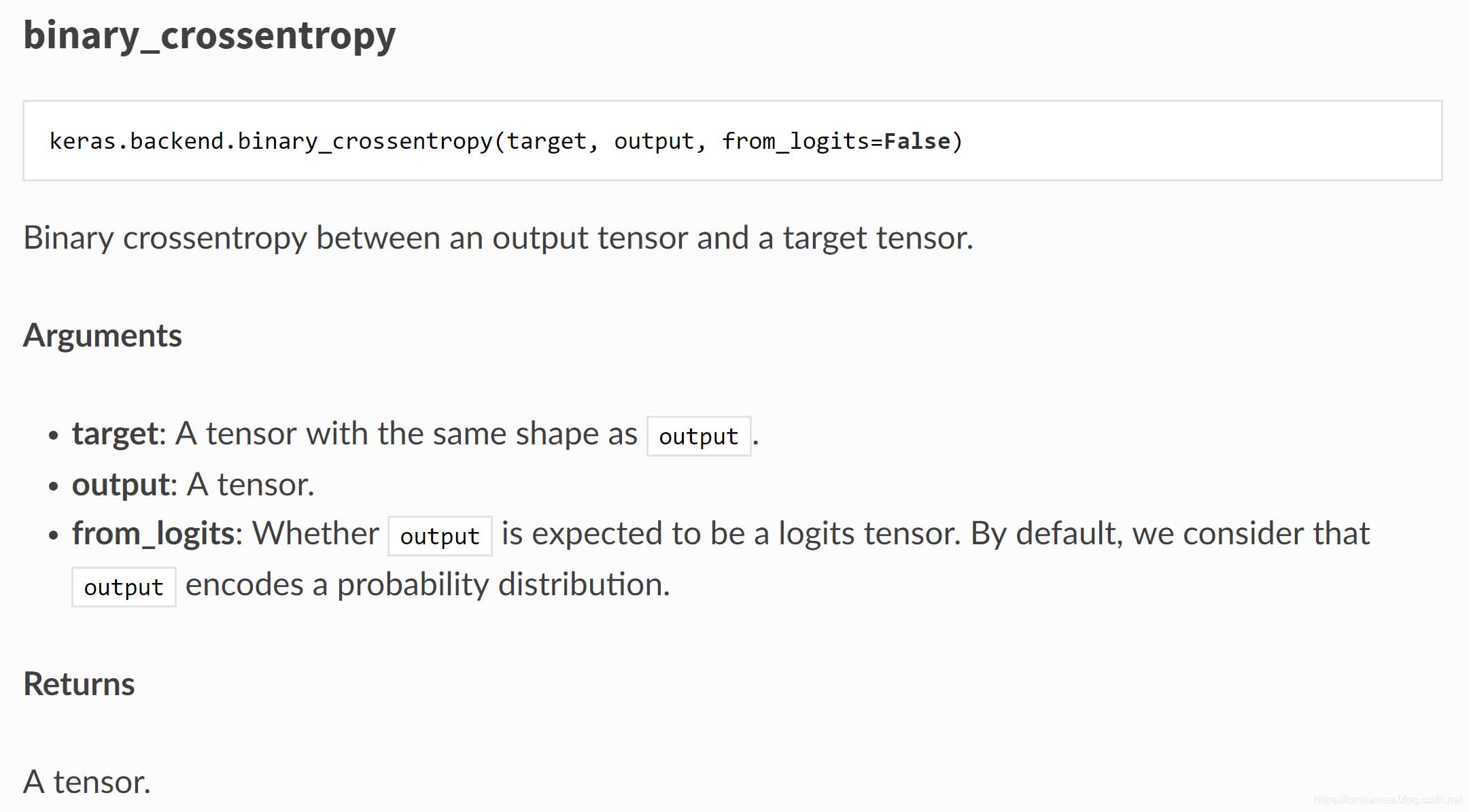

2. binary_crossentropy

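Binary cross-entropy: as the name suggests, this loss function is presumably intended for binary classification. The documentation does not go into detail, so let's look directly at the source: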

https://github.com/tensorflow/tensorflow/blob/r1.13/tensorflow/python/keras/backend.py

--------------------------------------------------------------------------------------------------------------------

def binary_crossentropy(target, output, from_logits=False):
  """Binary crossentropy between an output tensor and a target tensor.

  Arguments:
      target: A tensor with the same shape as `output`.
      output: A tensor.
      from_logits: Whether `output` is expected to be a logits tensor.
          By default, we consider that `output`
          encodes a probability distribution.

  Returns:
      A tensor.
  """
  # Note: nn.sigmoid_cross_entropy_with_logits
  # expects logits, Keras expects probabilities.
  if not from_logits:
    # transform back to logits
    epsilon_ = _to_tensor(epsilon(), output.dtype.base_dtype)
    output = clip_ops.clip_by_value(output, epsilon_, 1 - epsilon_)
    output = math_ops.log(output / (1 - output))
  return nn.sigmoid_cross_entropy_with_logits(labels=target, logits=output)

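As the source shows, the computation uses nn.sigmoid_cross_entropy_with_logits. Anyone familiar with TensorFlow will know this loss well: it can be used for simple binary classification as well as for multi-label tasks, and it is widely used. When the data is reasonable (for example, there is no class imbalance), it can usually be used as-is.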
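The steps in the source (clip, convert the probability back to a logit, then apply the sigmoid cross-entropy on logits) can be traced with a small plain-Python sketch. This is an illustration under assumptions, not the TensorFlow implementation: the function names, the `eps` default, and the example values are made up, while the stable formula `max(x, 0) - x * z + log(1 + exp(-|x|))` is the one given in the TensorFlow documentation for `sigmoid_cross_entropy_with_logits`.

```python
import math


def sigmoid_ce_with_logits(label, logit):
    """Stable form: max(x, 0) - x * z + log(1 + exp(-|x|))."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


def binary_ce(label, prob, eps=1e-7):
    """Clip the probability, convert it back to a logit, then apply the logit form."""
    prob = min(max(prob, eps), 1.0 - eps)
    logit = math.log(prob / (1.0 - prob))
    return sigmoid_ce_with_logits(label, logit)


# Multi-label example: three independent yes/no labels for one sample.
labels = [1.0, 0.0, 1.0]
probs = [0.9, 0.2, 0.6]          # assumed sigmoid outputs, one per label
losses = [binary_ce(z, p) for z, p in zip(labels, probs)]
direct = [-(z * math.log(p) + (1.0 - z) * math.log(1.0 - p))
          for z, p in zip(labels, probs)]
print(losses)
print(direct)                    # matches the line above up to rounding
```

Each label is scored independently of the others, which is what makes this loss suitable for multi-label tasks where several labels can be true at once.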

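Supplement: a simple way to define a custom loss function in Keras

First, let's look at how the objective functions we commonly use in Keras (such as mse and mae) are defined: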

import numpy as np
from keras import backend as K

def mean_squared_error(y_true, y_pred):
    return K.mean(K.square(y_pred - y_true), axis=-1)

def mean_absolute_error(y_true, y_pred):
    return K.mean(K.abs(y_pred - y_true), axis=-1)

def mean_absolute_percentage_error(y_true, y_pred):
    diff = K.abs((y_true - y_pred) / K.clip(K.abs(y_true), K.epsilon(), np.inf))
    return 100. * K.mean(diff, axis=-1)

# The backend crossentropy functions take (target, output),
# matching the backend source shown earlier.
def categorical_crossentropy(y_true, y_pred):
    '''Expects a binary class matrix instead of a vector of scalar classes.
    '''
    return K.categorical_crossentropy(y_true, y_pred)

def sparse_categorical_crossentropy(y_true, y_pred):
    '''expects an array of integer classes.
    Note: labels shape must have the same number of dimensions as output shape.
    If you get a shape error, add a length-1 dimension to labels.
    '''
    return K.sparse_categorical_crossentropy(y_true, y_pred)

def binary_crossentropy(y_true, y_pred):
    return K.mean(K.binary_crossentropy(y_true, y_pred), axis=-1)

def kullback_leibler_divergence(y_true, y_pred):
    y_true = K.clip(y_true, K.epsilon(), 1)
    y_pred = K.clip(y_pred, K.epsilon(), 1)
    return K.sum(y_true * K.log(y_true / y_pred), axis=-1)

def poisson(y_true, y_pred):
    return K.mean(y_pred - y_true * K.log(y_pred + K.epsilon()), axis=-1)

def cosine_proximity(y_true, y_pred):
    y_true = K.l2_normalize(y_true, axis=-1)
    y_pred = K.l2_normalize(y_pred, axis=-1)
    return -K.mean(y_true * y_pred, axis=-1)

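Following the same pattern, you can define an objective function for your own task. For example, one based on the difference between the predicted and true values: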

from keras import backend as K

def new_loss(y_true, y_pred):
    return K.mean((y_pred - y_true), axis=-1)

Then compile the model with the objective function you defined:

from keras import backend as K
from keras import optimizers

def my_loss(y_true, y_pred):
    return K.mean((y_pred - y_true), axis=-1)

# `model` and `lr` are assumed to be defined elsewhere.
model.compile(optimizer=optimizers.RMSprop(lr), loss=my_loss,
              metrics=['accuracy'])
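One property of this example loss is worth checking before adapting it: because it averages signed differences, positive and negative errors can cancel out. The sketch below is a plain-Python analogue for a single sample, written without Keras; the function names `my_loss_single` and `mae_single` and the sample values are hypothetical.

```python
def my_loss_single(y_true, y_pred):
    """Plain-Python analogue of K.mean(y_pred - y_true, axis=-1) for one sample."""
    diffs = [p - t for t, p in zip(y_true, y_pred)]
    return sum(diffs) / len(diffs)


def mae_single(y_true, y_pred):
    """Plain-Python analogue of mean_absolute_error for one sample."""
    diffs = [abs(p - t) for t, p in zip(y_true, y_pred)]
    return sum(diffs) / len(diffs)


y_true = [1.0, 2.0]
y_pred = [2.0, 1.0]                       # off by +1 and -1
print(my_loss_single(y_true, y_pred))     # signed errors cancel
print(mae_single(y_true, y_pred))         # absolute errors do not
```

For a real task, a custom loss would normally wrap the difference in something non-negative, as the built-in mean_squared_error and mean_absolute_error definitions above do.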

